Large Language Model Training Infrastructure Requirements & Solutions

Our Supercomputing Center is at the forefront of computational infrastructure design, employing a sophisticated model that integrates high-density blade assemblies and advanced liquid cooling technology. This approach, which includes a pioneering immersion cooling design, centralized power distribution, and comprehensive resource management, is strategically crafted to surpass the limitations associated with conventional deployment strategies. By enhancing the deployment density of computing units and facilitating both central and edge distributed deployment, we ensure our infrastructure meets critical design criteria — exceptional energy efficiency, superior performance, unwavering reliability, optimal availability, and extensive scalability. This holistic and forward-thinking design philosophy positions our supercomputing center as a beacon of innovation and efficiency in the computational field.

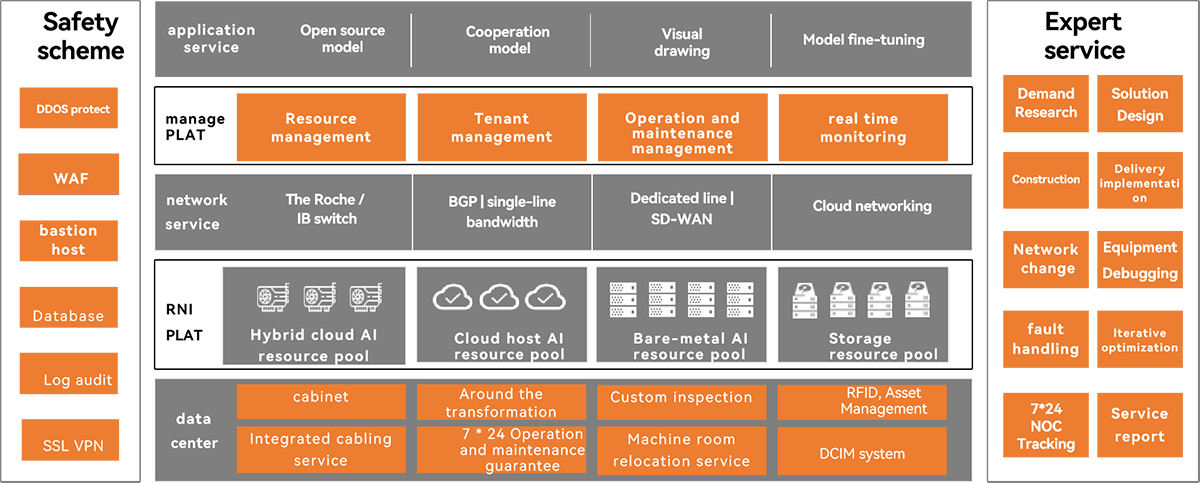

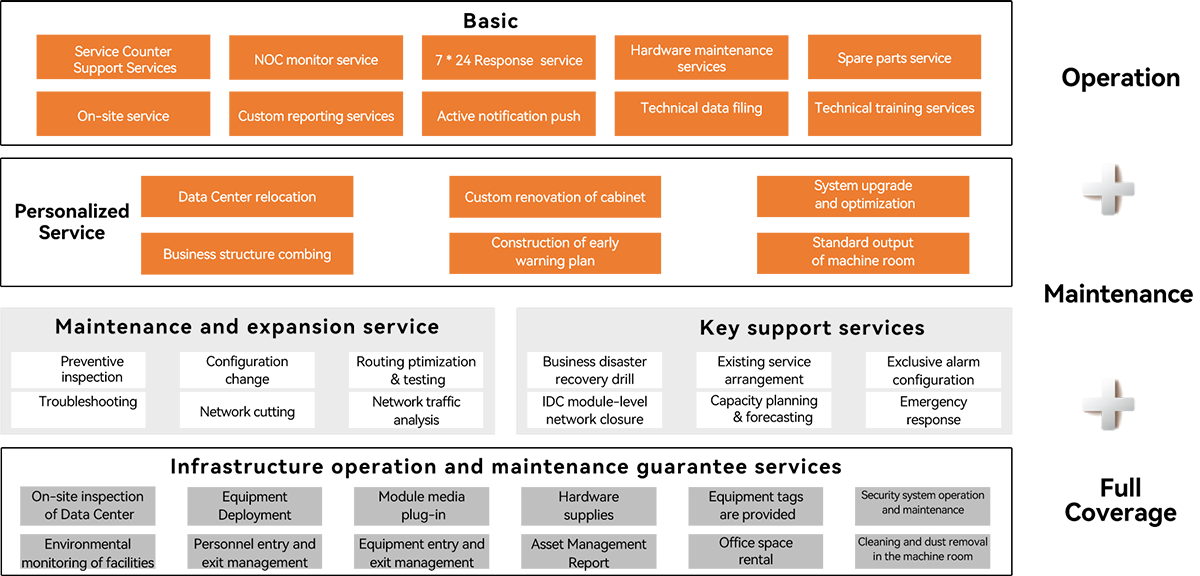

AIGC solution panorama

Our AIGC solution is designed to offer a comprehensive suite of product capabilities, encompassing data center operations, resource platforms, network services, management platforms, and application services. This open, secure, and customizable solution empowers customers to maximize their current server resources while facilitating seamless integration with the public cloud for flexible expansion. The result is a significant reduction in costs and an increase in IT efficiency.

Furthermore, our data center hosting area provides users with a physically isolated environment, offering exclusive cabinets, networks, servers, and storage resources. This, paired with our robust security solutions and expert services, ensures uninterrupted user operations and peace of mind. Our AIGC solution is tailored to support the dynamic needs of businesses, ensuring reliability, security, and scalability at every turn.

Digital Globe AIGC Infrastructure solution architecture

· Hybrid management provides logging, scheduling, and public network access capabilities

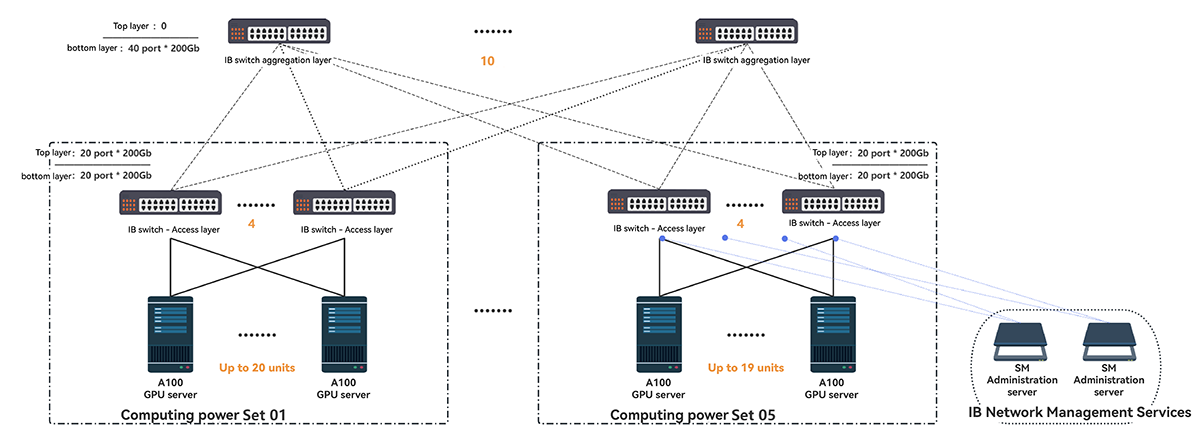

IB network layout

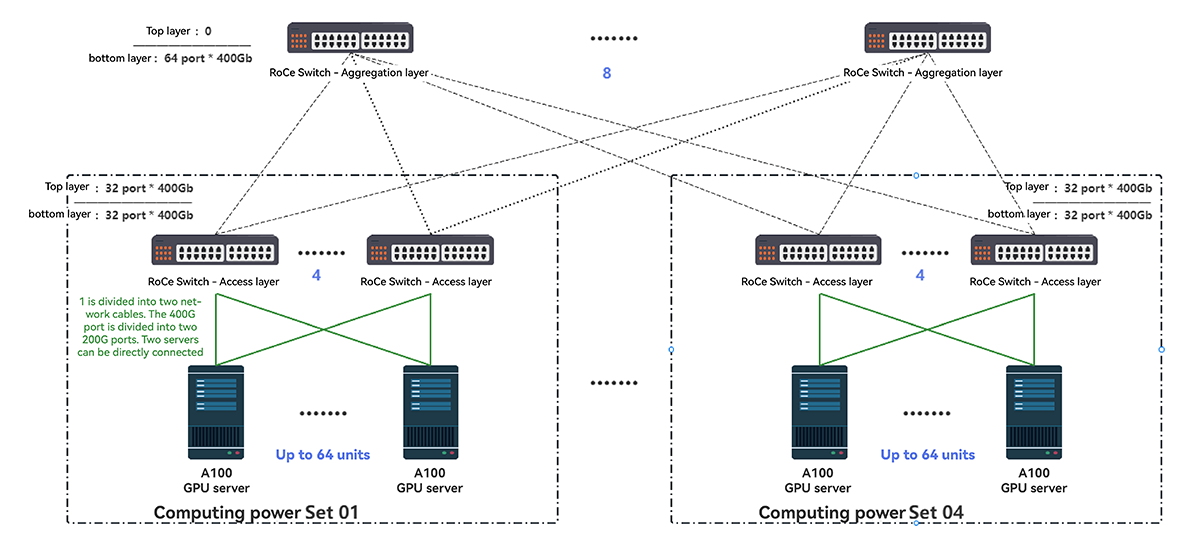

RoCE network layout

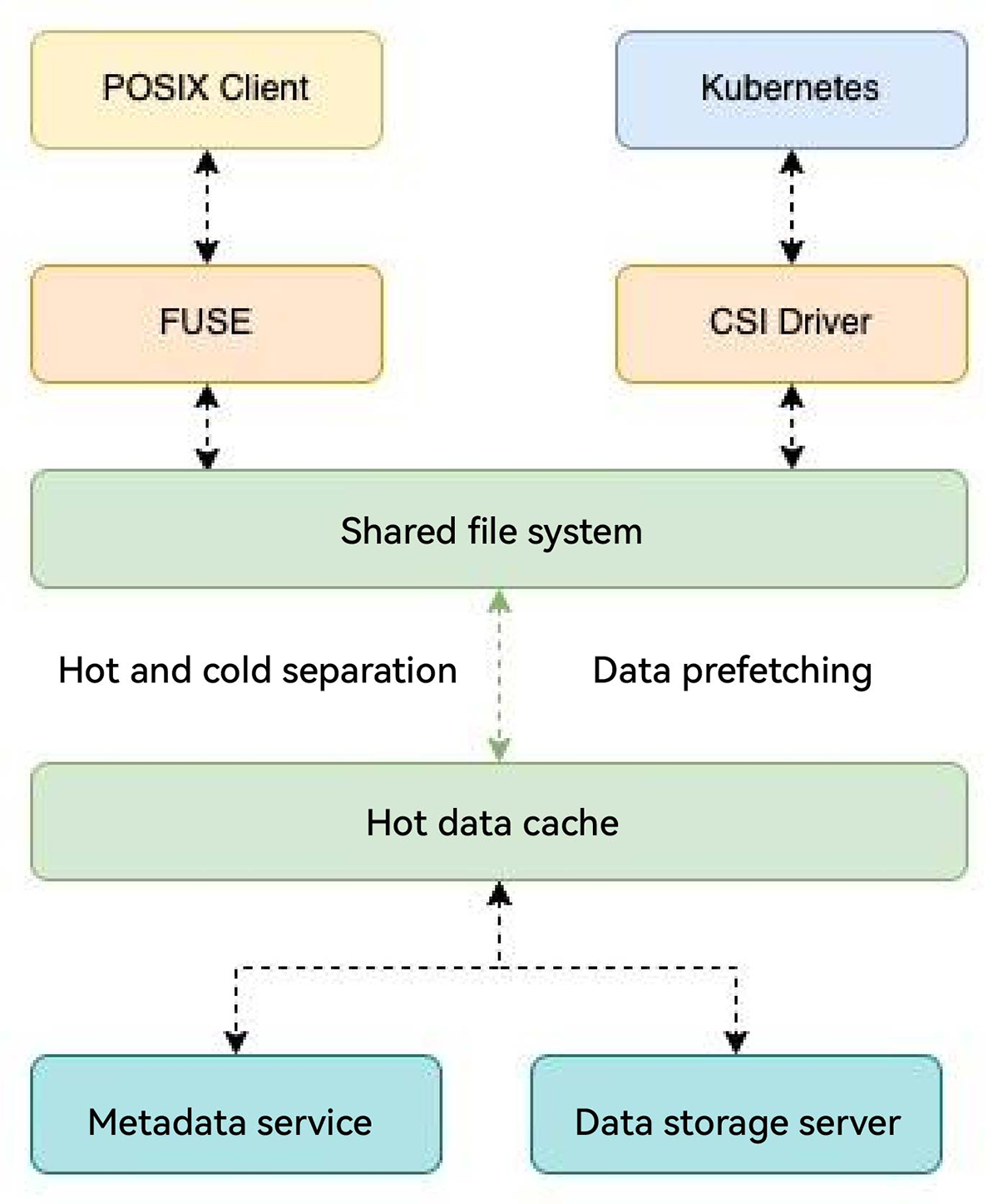

Storage solution

· Option:

Adopt a hot/cold data separation scheme: hot data is cached on the GPU servers, and cold data is kept in object storage. The scheme makes full use of otherwise idle CPU resources on the GPU servers to save cost.

· Advantages:

1. Hot data is cached on the GPU servers' NVMe disks and prefetched ahead of use, which maximizes compute performance.

2. FUSE and Kubernetes CSI interfaces are provided, so storage can be mounted in a way that facilitates compute scheduling.
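The hot/cold scheme described above can be illustrated with a minimal sketch. It is not DGMY's implementation: the class names `DictObjectStore` and `HotColdCache`, the `max_entries` limit, and the least-recently-used eviction policy are all assumptions made for illustration. A local directory stands in for the GPU server's NVMe disk, and an in-memory dictionary stands in for the object store.

```python
import os
import tempfile
import threading
from collections import OrderedDict
from concurrent.futures import ThreadPoolExecutor


class DictObjectStore:
    """Hypothetical stand-in for the cold tier (object storage)."""

    def __init__(self, objects):
        self.objects = dict(objects)
        self.reads = 0  # counts how often the cold tier is hit

    def get(self, key):
        self.reads += 1
        return self.objects[key]


class HotColdCache:
    """Hot tier on local disk (stand-in for NVMe) in front of a cold store."""

    def __init__(self, cold, cache_dir, max_entries=2, workers=2):
        self.cold = cold
        self.cache_dir = cache_dir
        self.max_entries = max_entries      # assumed capacity limit
        self.lru = OrderedDict()            # key -> local path, oldest first
        self.lock = threading.RLock()
        self.pool = ThreadPoolExecutor(max_workers=workers)

    def _path(self, key):
        return os.path.join(self.cache_dir, key.replace("/", "_"))

    def _fill(self, key):
        # The lock is held during the cold read to keep the sketch simple;
        # this serializes fetches, which a real cache would avoid.
        with self.lock:
            if key in self.lru:
                return
            path = self._path(key)
            with open(path, "wb") as f:
                f.write(self.cold.get(key))
            self.lru[key] = path
            while len(self.lru) > self.max_entries:
                _, old_path = self.lru.popitem(last=False)  # evict oldest
                os.remove(old_path)

    def prefetch(self, keys):
        """Warm the hot tier in background threads; returns futures."""
        return [self.pool.submit(self._fill, k) for k in keys]

    def read(self, key):
        """Serve from the hot tier, falling back to the cold tier on a miss."""
        with self.lock:
            self._fill(key)
            self.lru.move_to_end(key)  # mark as most recently used
            with open(self.lru[key], "rb") as f:
                return f.read()


if __name__ == "__main__":
    store = DictObjectStore({"shard/0": b"aaa", "shard/1": b"bbb"})
    with tempfile.TemporaryDirectory() as nvme_dir:
        cache = HotColdCache(store, nvme_dir)
        for fut in cache.prefetch(["shard/0", "shard/1"]):
            fut.result()
        print(cache.read("shard/0"), "cold reads:", store.reads)
```

In this sketch a training job would call `prefetch` for the shards it expects to need next and then `read` them from local disk, so the object store is contacted only once per shard while the shard remains in the hot tier.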

Partnering with DGMY

Choosing DGMY means embracing innovation and sustainability in the digital age. Our services are at the forefront of technology, designed not just to meet the current needs of our clients but to anticipate and shape future demands. As we continue to explore and expand the boundaries of digital technology, we invite you to join us in this journey of discovery and excellence. Together, we can create a more connected, sustainable, and innovative future.
